Welcome to combo’s documentation!

combo is a comprehensive Python toolbox for combining machine learning (ML) models and scores. Model combination can be considered as a subtask of ensemble learning, and has been widely used in real-world tasks and data science competitions like Kaggle [ABK07]. combo has been used/introduced in various research works since its inception [ARPN20, AZNL19].

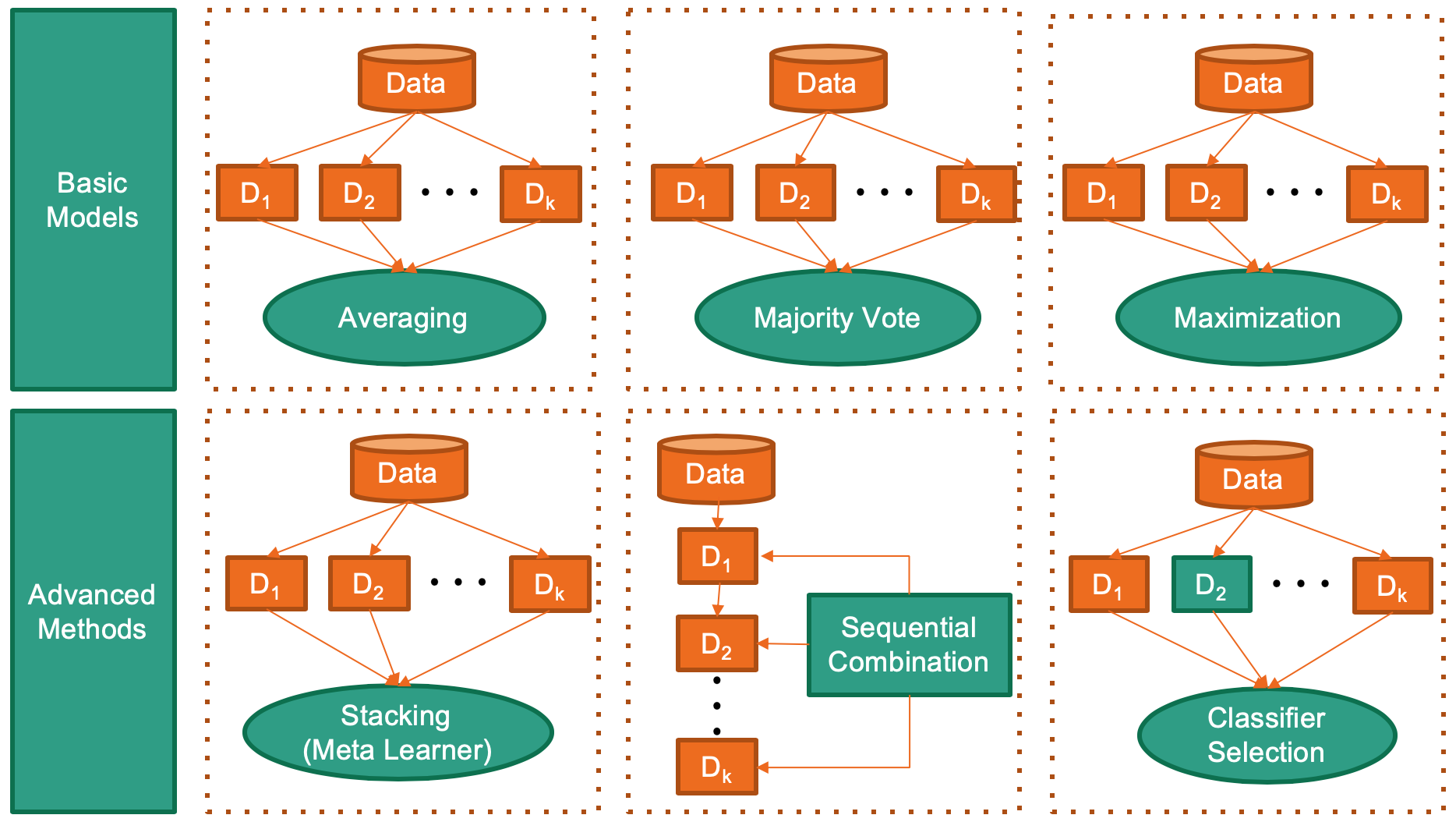

combo supports combining models and scores from key ML libraries such as scikit-learn, xgboost, and LightGBM, for crucial tasks including classification, clustering, and anomaly detection. See the accompanying figure for some representative combination approaches.

combo is featured for:

- Unified APIs, detailed documentation, and interactive examples across various algorithms.
- Advanced and latest models, such as Stacking/DCS/DES/EAC/LSCP.
- Comprehensive coverage for classification, clustering, anomaly detection, and raw score.
- Optimized performance with JIT and parallelization when possible, using numba and joblib.

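API Demo:

```python
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier

from combo.models.classifier_stacking import Stacking

# X_train, y_train, and X_test are assumed to be prepared beforehand

# initialize a group of base classifiers
classifiers = [DecisionTreeClassifier(), LogisticRegression(),
               KNeighborsClassifier(), RandomForestClassifier(),
               GradientBoostingClassifier()]

clf = Stacking(base_estimators=classifiers)  # initialize a Stacking model
clf.fit(X_train, y_train)  # fit the model

# predict on unseen data
y_test_labels = clf.predict(X_test)  # label prediction
y_test_proba = clf.predict_proba(X_test)  # probability prediction
```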

Citing combo:

The combo paper was published at AAAI 2020 (demo track). If you use combo in a scientific publication, we would appreciate citations to the following paper:

```bibtex
@inproceedings{zhao2020combo,
  title={Combining Machine Learning Models and Scores using combo library},
  author={Zhao, Yue and Wang, Xuejian and Cheng, Cheng and Ding, Xueying},
  booktitle={Thirty-Fourth AAAI Conference on Artificial Intelligence},
  month = {Feb},
  year={2020},
  address = {New York, USA}
}
```

or:

Zhao, Y., Wang, X., Cheng, C. and Ding, X., 2020. Combining Machine Learning Models and Scores using combo library. Thirty-Fourth AAAI Conference on Artificial Intelligence.

Key Links and Resources:

awesome-ensemble-learning (ensemble learning related books, papers, and more)

API Cheatsheet & Reference

Full API Reference: (https://pycombo.readthedocs.io/en/latest/api.html). The following APIs are applicable for most models for easy use.

- combo.models.base.BaseAggregator.fit(): Fit estimator. y is optional for unsupervised methods.
- combo.models.base.BaseAggregator.predict(): Predict on a particular sample once the estimator is fitted.
- combo.models.base.BaseAggregator.predict_proba(): Predict the probability of a sample belonging to each class once the estimator is fitted.
- combo.models.base.BaseAggregator.fit_predict(): Fit estimator and predict on X. y is optional for unsupervised methods.
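To illustrate the calling convention listed above, here is a minimal sketch of a toy aggregator that exposes the same four methods. `ThresholdClassifier` and `ToyVotingAggregator` are hypothetical names written only for this illustration; they are not part of combo, and combo's own classes may differ in signature and behavior.

```python
from collections import Counter


class ThresholdClassifier:
    """Hypothetical 1-D base classifier: predicts 1 when x >= threshold."""

    def __init__(self, threshold):
        self.threshold = threshold

    def fit(self, X, y=None):
        # Nothing to learn in this toy model; return self to allow chaining.
        return self

    def predict(self, X):
        return [1 if x >= self.threshold else 0 for x in X]


class ToyVotingAggregator:
    """Hypothetical aggregator with fit, predict, predict_proba, fit_predict."""

    def __init__(self, base_estimators):
        self.base_estimators = base_estimators

    def fit(self, X, y=None):
        # Fit every base estimator on the same data.
        for est in self.base_estimators:
            est.fit(X, y)
        return self

    def _votes(self, X):
        # One tuple of votes per sample, one vote per base estimator.
        per_estimator = [est.predict(X) for est in self.base_estimators]
        return list(zip(*per_estimator))

    def predict(self, X):
        # Majority vote over the base estimators' labels.
        return [Counter(row).most_common(1)[0][0] for row in self._votes(X)]

    def predict_proba(self, X):
        # Fraction of votes for class 0 and class 1.
        n = len(self.base_estimators)
        return [[row.count(0) / n, row.count(1) / n] for row in self._votes(X)]

    def fit_predict(self, X, y=None):
        return self.fit(X, y).predict(X)


X = [0.1, 0.45, 0.9]
agg = ToyVotingAggregator([ThresholdClassifier(t) for t in (0.3, 0.5, 0.7)])
labels = agg.fit_predict(X)   # y omitted, as allowed for unsupervised use
proba = agg.predict_proba(X)
```

The point of the sketch is only the shape of the interface: `fit` returns the fitted object, `predict` and `predict_proba` work on already fitted estimators, and `fit_predict` chains the two.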

For raw score combination (after the score matrix is generated), use individual methods from “score_comb.py” directly. Raw score combination API: (https://pycombo.readthedocs.io/en/latest/api.html#score-combination).
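As a rough illustration of what raw score combination does, the sketch below combines a small score matrix (rows are samples, columns are base models) by average, weighted average, maximization, and median. It uses only the Python standard library; the function names and the example scores are made up for this illustration and are not guaranteed to match the functions in score_comb.py.

```python
from statistics import mean, median

# Hypothetical score matrix: 3 samples (rows) scored by 3 base models (columns).
scores = [
    [0.2, 0.4, 0.9],
    [0.5, 0.5, 0.5],
    [0.1, 0.8, 0.3],
]


def combine_average(scores, weights=None):
    """Row-wise average; with weights, a weighted average."""
    if weights is None:
        return [mean(row) for row in scores]
    total = sum(weights)
    return [sum(s * w for s, w in zip(row, weights)) / total for row in scores]


def combine_maximization(scores):
    """Row-wise maximum across base models."""
    return [max(row) for row in scores]


def combine_median(scores):
    """Row-wise median across base models."""
    return [median(row) for row in scores]


avg_scores = combine_average(scores)
weighted_scores = combine_average(scores, weights=[1, 1, 2])
max_scores = combine_maximization(scores)
median_scores = combine_median(scores)
```

Each function returns one combined score per sample, so the output has as many entries as the score matrix has rows.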

Implemented Algorithms

combo groups combination frameworks by tasks. General purpose methods are fundamental ones which can be applied to various tasks.

| Task | Algorithm | Year | Ref |
|---|---|---|---|
| General Purpose | Average & Weighted Average: average across all scores/prediction results, maybe with weights | N/A | [AZho12] |
| General Purpose | Maximization: simple combination by taking the maximum scores | N/A | [AZho12] |
| General Purpose | Median: take the median value across all scores/prediction results | N/A | [AZho12] |
| General Purpose | Majority Vote & Weighted Majority Vote | N/A | [AZho12] |
| Classification | SimpleClassifierAggregator: combining classifiers by general purpose methods above | N/A | N/A |
| Classification | DCS: Dynamic Classifier Selection (Combination of multiple classifiers using local accuracy estimates) | 1997 | [AWKB97] |
| Classification | DES: Dynamic Ensemble Selection (From dynamic classifier selection to dynamic ensemble selection) | 2008 | [AKSBJ08] |
| Classification | Stacking (meta ensembling): use a meta learner to learn the base classifier results | N/A | [AGor16] |
| Clustering | Clusterer Ensemble: combine the results of multiple clustering results by relabeling | 2006 | [AZT06] |
| Clustering | Combining multiple clusterings using evidence accumulation (EAC) | 2002 | [AFJ05] |
| Anomaly Detection | SimpleDetectorCombination: combining outlier detectors by general purpose methods above | N/A | [AAS17] |
| Anomaly Detection | Average of Maximum (AOM): divide base detectors into subgroups to take the maximum, and then average | 2015 | [AAS15] |
| Anomaly Detection | Maximum of Average (MOA): divide base detectors into subgroups to take the average, and then maximize | 2015 | [AAS15] |
| Anomaly Detection | XGBOD: a semi-supervised combination framework for outlier detection | 2018 | [AZH18] |
| Anomaly Detection | Locally Selective Combination (LSCP) | 2019 | [AZNHL19] |
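The AOM and MOA descriptions in the table above can be sketched in a few lines. This is a minimal illustration using only the standard library, under two assumptions that are not taken from combo: the detector scores are already on a comparable scale, and subgroups are fixed contiguous slices (chosen here so the result is deterministic). The function names are hypothetical.

```python
from statistics import mean


def _split_into_buckets(row, n_buckets):
    """Split one sample's scores into contiguous, equally sized subgroups."""
    if len(row) % n_buckets != 0:
        raise ValueError("number of detectors must be divisible by n_buckets")
    size = len(row) // n_buckets
    return [row[i:i + size] for i in range(0, len(row), size)]


def aom(scores, n_buckets):
    """Average of Maximum: max within each subgroup, then average the maxima."""
    return [
        mean(max(bucket) for bucket in _split_into_buckets(row, n_buckets))
        for row in scores
    ]


def moa(scores, n_buckets):
    """Maximum of Average: average within each subgroup, then take the max."""
    return [
        max(mean(bucket) for bucket in _split_into_buckets(row, n_buckets))
        for row in scores
    ]


# Hypothetical outlier scores: 2 samples (rows), 4 detectors (columns).
scores = [
    [1.0, 3.0, 2.0, 6.0],
    [0.0, 2.0, 4.0, 4.0],
]
aom_scores = aom(scores, n_buckets=2)
moa_scores = moa(scores, n_buckets=2)
```

For the first sample, the subgroups are [1.0, 3.0] and [2.0, 6.0]: AOM averages the maxima 3.0 and 6.0, while MOA takes the larger of the means 2.0 and 4.0.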

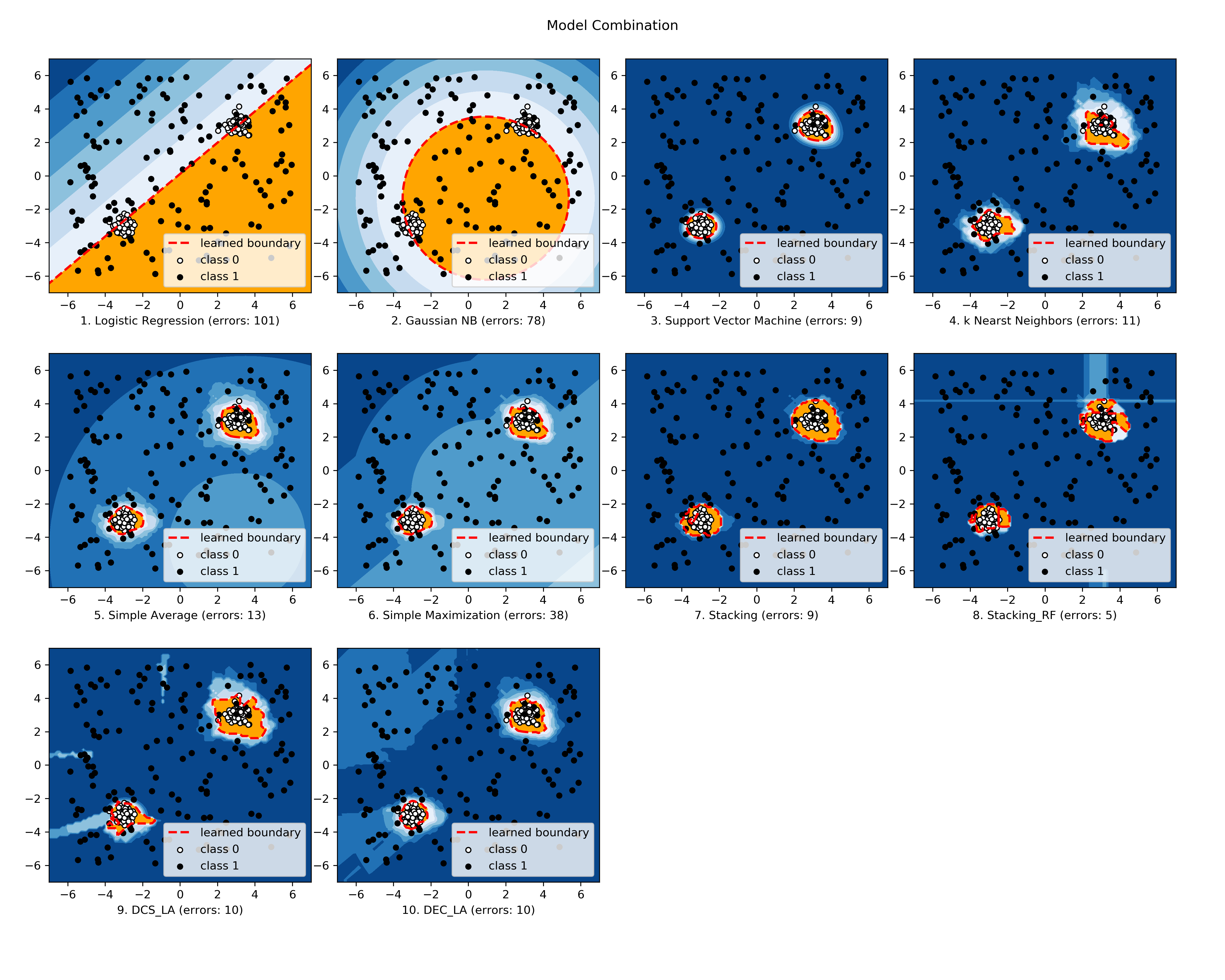

A comparison of selected implemented models is available (figure, compare_selected_classifiers.py, interactive Jupyter notebooks). For the Jupyter notebooks, please navigate to “/notebooks/compare_selected_classifiers.ipynb”.

Development Status

combo is currently under development as of Feb, 2020. A concrete plan has been laid out and will be implemented in the next few months.

Like other libraries built by us, e.g., the Python Outlier Detection Toolbox (pyod), combo is targeted for publication in the Journal of Machine Learning Research (JMLR), open-source software track. A demo paper was presented at AAAI 2020 as a progress update.

Watch & Star to get the latest update! Also feel free to send me an email (zhaoy@cmu.edu) for suggestions and ideas.

References

- [AAS15] Charu C Aggarwal and Saket Sathe. Theoretical foundations and algorithms for outlier ensembles. ACM SIGKDD Explorations Newsletter, 17(1):24–47, 2015.
- [AAS17] Charu C Aggarwal and Saket Sathe. Outlier ensembles: An introduction. Springer, 2017.
- [ABK07] Robert M Bell and Yehuda Koren. Lessons from the Netflix prize challenge. SIGKDD Explorations, 9(2):75–79, 2007.
- [AFJ05] Ana LN Fred and Anil K Jain. Combining multiple clusterings using evidence accumulation. IEEE Transactions on Pattern Analysis and Machine Intelligence, 27(6):835–850, 2005.
- [AGor16] Ben Gorman. A Kaggler's guide to model stacking in practice. Available at http://blog.kaggle.com/2016/12/27/a-kagglers-guide-to-model-stacking-in-practice, 2016.
- [AKSBJ08] Albert HR Ko, Robert Sabourin, and Alceu Souza Britto Jr. From dynamic classifier selection to dynamic ensemble selection. Pattern Recognition, 41(5):1718–1731, 2008.
- [ARPN20] Sebastian Raschka, Joshua Patterson, and Corey Nolet. Machine learning in Python: main developments and technology trends in data science, machine learning, and artificial intelligence. arXiv preprint arXiv:2002.04803, 2020.
- [AWKB97] Kevin Woods, W. Philip Kegelmeyer, and Kevin Bowyer. Combination of multiple classifiers using local accuracy estimates. IEEE Transactions on Pattern Analysis and Machine Intelligence, 19(4):405–410, 1997.
- [AZH18] Yue Zhao and Maciej K Hryniewicki. XGBOD: improving supervised outlier detection with unsupervised representation learning. In 2018 International Joint Conference on Neural Networks, IJCNN 2018, 1–8. IEEE, 2018. URL: https://doi.org/10.1109/IJCNN.2018.8489605.
- [AZNHL19] Yue Zhao, Zain Nasrullah, Maciej K Hryniewicki, and Zheng Li. LSCP: locally selective combination in parallel outlier ensembles. In Proceedings of the 2019 SIAM International Conference on Data Mining, SDM 2019, 585–593. Calgary, Canada, May 2019. SIAM. URL: https://doi.org/10.1137/1.9781611975673.66.
- [AZNL19] Yue Zhao, Zain Nasrullah, and Zheng Li. PyOD: a Python toolbox for scalable outlier detection. Journal of Machine Learning Research, 20:1–7, 2019.
- [AZho12] Zhi-Hua Zhou. Ensemble methods: foundations and algorithms. Chapman and Hall/CRC, 2012.
- [AZT06] Zhi-Hua Zhou and Wei Tang. Clusterer ensemble. Knowledge-Based Systems, 19(1):77–83, 2006.